Model Distillation

Slash Inference Costs & Latency

Are you a developer looking to reduce LLM costs and latency whilst maintaining output quality? Model distillation is one of the most effective ways to optimise your app’s AI calls.

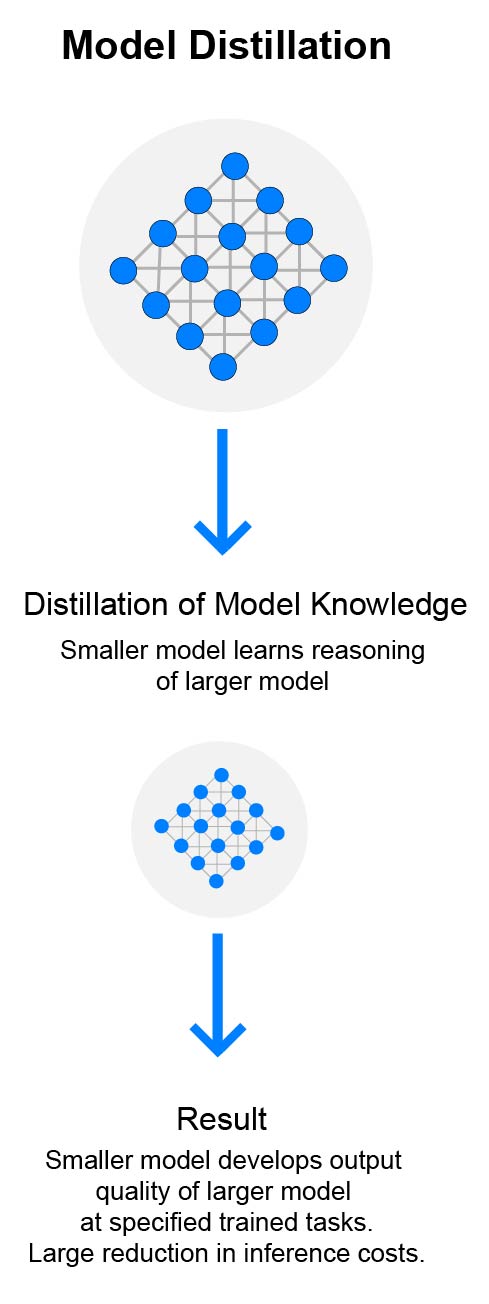

What is Model Distillation?

Model distillation is the process of fine-tuning a smaller LLM on outputs from a larger one. Through distillation, the smaller LLM acquires the larger model’s reasoning patterns for the specific tasks it is trained on, while requiring a fraction of the compute and memory. The end result is lower token costs and faster responses, at a level of performance that has been validated on those tasks.
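As a rough illustration of the idea, the sketch below pairs each prompt with a teacher model's output to form training examples for the smaller model. The function names, the `prompt`/`completion` record layout, and the toy teacher are assumptions made for this example; in practice the teacher would be a call to a large LLM, and the record format would follow whatever your fine-tuning tooling expects.

```python
import json
from typing import Callable, Iterable


def build_distillation_dataset(
    prompts: Iterable[str], teacher: Callable[[str], str]
) -> list[dict]:
    """Pair each prompt with the teacher's output to form a training example."""
    return [{"prompt": p, "completion": teacher(p)} for p in prompts]


def to_jsonl(records: list[dict]) -> str:
    """Serialise records as JSON Lines, one training example per line."""
    return "\n".join(json.dumps(r) for r in records)


def toy_teacher(prompt: str) -> str:
    """Hypothetical stand-in for a large model doing sentiment classification."""
    return "positive" if "great" in prompt.lower() else "negative"


dataset = build_distillation_dataset(
    ["Great service!", "Slow and buggy."], toy_teacher
)
print(to_jsonl(dataset))
```

The smaller model is then fine-tuned on these records, so it learns to produce the teacher's answer for the same kind of input.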

What are the cost savings?

In production deployments, model distillation typically reduces inference costs by 5x to 20x, while preserving high-quality performance on the target tasks.

Inference Cost-per-Token Savings

5x-20x
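To see what a 5x to 20x reduction could mean for a monthly bill, here is a small back-of-the-envelope calculation. The token volume and the price per million tokens are made-up figures for illustration only; substitute your own usage and your provider's actual pricing.

```python
def monthly_inference_cost(
    tokens_per_month: int, price_per_million_tokens: float
) -> float:
    """Monthly spend for a given token volume and per-million-token price."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def distilled_cost_range(
    baseline_cost: float, min_reduction: float = 5.0, max_reduction: float = 20.0
) -> tuple[float, float]:
    """Return (lowest, highest) expected cost after a 5x to 20x reduction."""
    return baseline_cost / max_reduction, baseline_cost / min_reduction


# Hypothetical workload: 500 million tokens a month at 10.00 per million tokens.
baseline = monthly_inference_cost(500_000_000, 10.0)
low, high = distilled_cost_range(baseline)

print(f"Baseline: {baseline:,.2f} per month")
print(f"After distillation: {low:,.2f} to {high:,.2f} per month")
```

With these assumed figures, a baseline of 5,000.00 per month would fall to somewhere between 250.00 and 1,000.00.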

When should we use distillation?

Model distillation is best suited when:

- The task is clearly defined: The model is solving a specific, repeatable problem with a familiar input and output.

- There is a high-quality teacher model: A strong model (or human experts) can generate consistent, reliable outputs for the student model to learn from.

- Outputs are structured or predictable: Tasks like classification, extraction, or structured generation (e.g. JSON) distill especially well.

- Consistency matters more than creativity: Distilled models excel when the goal is reliable, standardized outputs—not open-ended generation.

- Latency and cost are production constraints: Distillation shines when you need fast responses and efficient scaling.

Who are OptimumPartner AI?

OptimumPartner AI is an end-to-end fine-tuning data company that specializes in model distillation and fine-tuning services, serving English-speaking markets across North America and Europe.

What’s the Process?

- We start with a call to scope your project or app requirements.

- We create a dataset of outputs from a larger, more performant model.

- We distill the larger model’s outputs into a smaller model via fine-tuning.

- We create a validation dataset and test the new model’s performance against it.

- We iterate on the large-model dataset and run further fine-tuning rounds until the smaller model reaches the target performance level.

- The result: large model performance for specified tasks at a fraction of the compute cost and latency.
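The validate-and-iterate part of the process above can be sketched as a simple loop. This is a minimal sketch under stated assumptions, not our production pipeline: `train_student` is a hypothetical callable standing in for a full fine-tuning run, exact-match accuracy is just one possible metric, and the 0.95 target and toy data are illustrative.

```python
from typing import Callable

Model = Callable[[str], str]
Example = tuple[str, str]  # (input, expected output)


def accuracy(model: Model, validation_set: list[Example]) -> float:
    """Share of validation inputs where the model's output matches exactly."""
    correct = sum(1 for x, expected in validation_set if model(x) == expected)
    return correct / len(validation_set)


def distill_until_target(
    train_student: Callable[[int], Model],
    validation_set: list[Example],
    target: float = 0.95,
    max_rounds: int = 5,
) -> tuple[int, list[float]]:
    """Retrain and re-test the student until it reaches the target score."""
    history: list[float] = []
    for round_number in range(1, max_rounds + 1):
        student = train_student(round_number)  # one fine-tuning round
        score = accuracy(student, validation_set)
        history.append(score)
        if score >= target:
            break
    return round_number, history


# Toy stand-in: each round the "student" has learned one more example.
validation = [("a", "A"), ("b", "B"), ("c", "C"), ("d", "D")]


def toy_train_student(round_number: int) -> Model:
    known = dict(validation[: round_number + 1])
    return lambda x: known.get(x, "?")


rounds, scores = distill_until_target(toy_train_student, validation)
print(rounds, scores)
```

In a real project, each round would refine the teacher-generated dataset before retraining, and the validation set would be kept separate from the training data.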

Let’s Talk

Let’s discuss your application, and we will show you the cost savings and performance improvements you could achieve via model distillation. Visit our Contact Us page to submit some brief details about your project, and let’s set up a call at your convenience.

Company Snapshot

Registration UK # (13226975)