Why Do We Need to Add Domain Knowledge to LLMs?

LLMs are trained on a broad array of sources, and their knowledge cutoff often falls well before the model is released to the public. As a result, they typically lack proprietary knowledge and do not have up-to-date information at inference time.

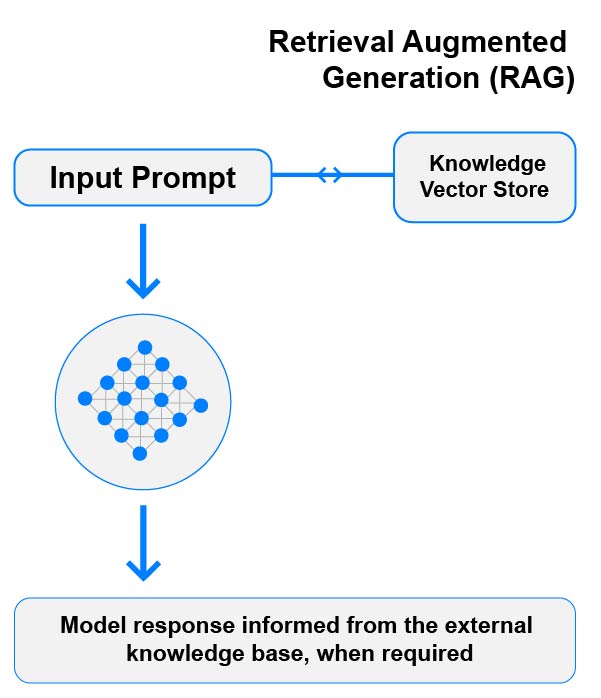

How We Add Knowledge to a Model

We add knowledge to the model by using a vector store. The vector store takes your documents, splits them into ‘chunks’ and embeds each chunk as a vector that represents its semantic meaning in a high-dimensional vector space. When a prompt arrives, and before it is sent to the LLM, your backend embeds the prompt in the same way and compares it against the stored vectors using cosine similarity to find relevant chunks. Those chunks are then included in the prompt, allowing the LLM to draw on the data when formulating its response. This is known as Retrieval-Augmented Generation, or RAG.
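The retrieval step can be illustrated with a minimal Python sketch. It is not a production implementation: the `embed` function below is a toy bag-of-words counter standing in for a real embedding model, and the names `chunk`, `VectorStore`, and `build_prompt` are hypothetical, chosen only for this example. What it does show is the shape of the flow: chunk the documents, vectorize each chunk, score the prompt against the stored vectors with cosine similarity, and place the best matches in the prompt.

```python
import math
import re
from collections import Counter


def chunk(text, max_words=40):
    """Split a document into chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text):
    """Toy embedding (assumption): word counts stand in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a, b):
    """Cosine of the angle between two sparse vectors; 0.0 if either is empty."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class VectorStore:
    """Hypothetical in-memory store holding (chunk_text, vector) pairs."""

    def __init__(self):
        self.entries = []

    def add_document(self, text, max_words=40):
        for piece in chunk(text, max_words):
            self.entries.append((piece, embed(piece)))

    def search(self, query, top_k=2):
        """Return up to top_k chunks with the highest non-zero similarity to the query."""
        q = embed(query)
        scored = [(cosine_similarity(q, vec), piece) for piece, vec in self.entries]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [piece for score, piece in scored[:top_k] if score > 0]


def build_prompt(store, question, top_k=2):
    """Prepend the retrieved chunks to the user's question."""
    context = "\n".join(f"- {c}" for c in store.search(question, top_k))
    return f"Use the context below to answer.\n\nContext:\n{context}\n\nQuestion: {question}"


if __name__ == "__main__":
    store = VectorStore()
    store.add_document("Our refund policy allows returns within 30 days of purchase.")
    store.add_document("Support is available by email on weekdays from 9am to 5pm.")
    print(build_prompt(store, "What is the refund policy?", top_k=1))
```

In a real system, `embed` would call an embedding model and the store would typically be a dedicated vector database, but the scoring and prompt assembly follow the same pattern.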

Let’s Talk

Discuss your use case and determine whether fine-tuning is the right approach. Visit our Contact Us page to share some details about your project, and let’s set up a call.

Company Snapshot

Registration UK # (13226975)